Unlike in the DIY workflow, with RPA you don't have to write code every time you collect data from new sources. RPA platforms usually provide built-in tools for web scraping, which saves time and is much easier to use. Websites often add new features and make structural changes, which bring scraping tools to a halt. This happens when the software is written against specific elements of the website's code. One can write a few lines of code in Python to complete a large scraping task. Also, since Python is one of the most popular programming languages, the community is very active.

10 Best RPA Tools (August 2023) - Unite.AI

Posted: Tue, 01 Aug 2023 07:00:00 GMT

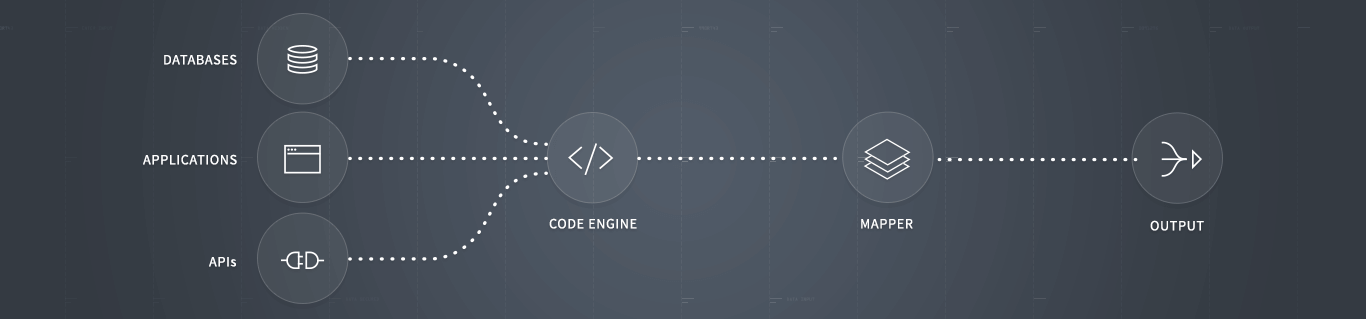

Depending on several factors, such as your company's unique needs, resources, and technical expertise, you can use an in-house or outsourced web scraper. Before you can automate systems, networks, and applications, you need access to databases. Automate provides the tools for database access, queries, and transactions with all ODBC/OLE data sources. With data access, you can use the power of Automate's other automation tools to streamline IT and business processes. Any company that handles a high volume of data needs a comprehensive automation tool to bridge the gap between unstructured data and business applications. Extract and transform your business-critical data with automated data scraping and screen scraping.

You can adapt the script above to scrape all the books from all the categories and save them in separate Excel files for each category. In the code above, we first import AutoScraper from the autoscraper library. Then, we provide the URL from which we want to scrape the information in UrlToScrap. At this point, your Python script already scrapes the site and filters its HTML for relevant job postings. However, what's still missing is the link to apply for a job.
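The missing step, pulling the application link out of each posting, might look like the sketch below. It is only an illustration under stated assumptions: the sample markup, the example.com URLs, and the "Apply" link text are hypothetical, and the standard library's `html.parser` is used here in place of a third-party parser so the snippet runs without extra installs.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for an already downloaded job board page.
SAMPLE_HTML = """
<div class="card"><h2 class="title">Senior Python Developer</h2>
  <a href="https://example.com/learn">Learn</a>
  <a href="https://example.com/jobs/1">Apply</a></div>
<div class="card"><h2 class="title">Energy Engineer</h2>
  <a href="https://example.com/jobs/2">Apply</a></div>
"""


class ApplyLinkParser(HTMLParser):
    """Collect the href of every <a> element whose text is 'Apply'."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None  # href of the <a> tag currently open, if any

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")

    def handle_data(self, data):
        # Only text that sits inside an open <a> tag is considered.
        if self._href and data.strip() == "Apply":
            self.links.append(self._href)

    def handle_endtag(self, tag):
        if tag == "a":
            self._href = None


def extract_apply_links(html):
    """Return the list of 'Apply' link URLs found in the given HTML."""
    parser = ApplyLinkParser()
    parser.feed(html)
    return parser.links


apply_links = extract_apply_links(SAMPLE_HTML)
```

On a real site the link text and surrounding structure will differ, so the matching condition in `handle_data` would need to be adjusted to whatever the page actually uses.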

Web Scraper offers complete JavaScript execution, waiting for Ajax requests, pagination handlers, and page scroll-down. Cheerio does not interpret the result as a web browser, produce a visual rendering, apply CSS, load external resources, or execute JavaScript, which is why it is so fast. Like Puppeteer, Playwright is an open-source library that anyone can use for free. Playwright provides cross-browser support: it can drive Chromium, WebKit, and Firefox. Octoparse offers cloud services and IP proxy servers to bypass reCAPTCHA and blocking. Web Unblocker lets you extend your sessions with the same proxy to make multiple requests.

The Python libraries requests and Beautiful Soup are powerful tools for the job. If you like to learn with hands-on examples and have a basic understanding of Python and HTML, then this tutorial is for you. With ElectroNeek's web scraping tool, you don't need to be an engineer to automatically collect and process the data you need from the web. There's no need for complex script writing: all you need to do is show the platform exactly what data you want by selecting a few desired elements, and the tool will do the rest. Typically, you can expect the tool to extract data from an individual website in less than a second.

Using Methods To Extract Data From The Internet

For the purposes of this article, consider a node to be an element. Either a single element or an array of elements can be selected. However, after performing the request, you might not get what you expected.
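The difference between selecting a single element and selecting a collection, and the case where nothing matches, can be sketched as follows. This is a minimal illustration, not the method of any tool named above: the snippet and its class names are hypothetical, and the standard library's `xml.etree.ElementTree` stands in for an HTML parser, which only works because the sample is well-formed.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed snippet; real pages usually need a tolerant HTML parser.
SNIPPET = """
<ul>
  <li class="book">Dune</li>
  <li class="book">Emma</li>
  <li class="note">Out of stock</li>
</ul>
""".strip()

root = ET.fromstring(SNIPPET)

# find() returns the first matching element, or None when nothing matches.
first_book = root.find("li[@class='book']")

# findall() always returns a list, which is empty when nothing matches.
all_books = root.findall("li[@class='book']")

# A selector that matches nothing: the "not what you expected" case.
missing = root.find("li[@class='price']")

first_title = first_book.text
all_titles = [li.text for li in all_books]
```

Checking for `None` (or an empty list) before reading `.text` avoids an `AttributeError` when the page does not contain the element you selected.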

- Advanced web scrapers are equipped to scan, or "crawl," entire websites, including CSS and JavaScript elements.

- So, before using any scraping tool, users need to make sure that the tool can comply with these basic rules.

- A full-service web scraping provider is a better and more economical option in such cases.

- Using crawler software, the fastest way to list the product page URLs of a website is to create an Excel file with all the links.

- There are many web scraping libraries available for Python, such as Scrapy and Beautiful Soup.

Parsehub uses machine learning to parse the most complex sites and generates the output file in JSON, CSV, Google Sheets, or through an API. It lets you create an automated testing environment using the latest JavaScript and browser features. There is an advanced mode that allows the customization of a data scraper to extract target data from complex sites. Web Unblocker offers a one-week free trial for users to test the tool. Web Unlocker adjusts in real time to stay undetected by bots that constantly develop new methods to block users. Web Unlocker scrapes data from websites with automated IP address rotation.

Benefits Of Automated Data Extraction With Automate

The terms are often used interchangeably, and both deal with the process of extracting information. There are as many solutions as there are websites online, and more. This information can be a great resource to build applications around, and knowledge of writing such code can also be used for automated web testing.

How Web Scraping in Excel Works: Import Data From the Web - groovyPost

Posted: Wed, 28 Jul 2021 07:00:00 GMT

The best web scraping solutions for your business should be able to handle CSV files, because frequent Microsoft Excel users are familiar with this format. With real-time insight into the scraped data, you can make well-considered, data-driven decisions about your company's business strategy. For example, you could anticipate an increase in demand for your products or services at a particular time by monitoring the behavior of your target audience. You can then keep the necessary amount of goods in stock to avoid shortages and keep your customers satisfied.
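Producing CSV output that Excel can open is straightforward with Python's standard `csv` module. The sketch below is illustrative only: the records and field names are hypothetical placeholders for whatever a scraper actually returns, and the text is built in memory rather than written to disk.

```python
import csv
import io

# Hypothetical scraped records; the field names are illustrative only.
rows = [
    {"title": "Dune", "category": "Science Fiction", "price": "9.99"},
    {"title": "Emma", "category": "Classics", "price": "5.49"},
]


def to_csv(records, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


csv_text = to_csv(rows, ["title", "category", "price"])
```

To save the result as a file instead, the same writer can be pointed at `open("books.csv", "w", newline="", encoding="utf-8")`; the file name here is, again, just an example.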

Automated Web Scraping: Easy Access To Reliable Structured Web Data

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind, you should consult your legal advisors and carefully read the particular website's terms of service or obtain a scraping license.

The script above applies InfoScraper to another_book_url and prints the scraped_data. Notice that the scraped data contains some unnecessary information along with the desired information. This is due to the get_result_similar() method, which returns information similar to the wanted_list.